Happy Tuesday!

NVIDIA is about to write one of the largest checks in AI history; a Toronto startup unveiled a chip that permanently embeds model weights in transistors; and quantum networking just hit a 10,000x milestone on the streets of New York City.

Here's what to expect in this week's newsletter:

Spotlights: NVIDIA nears a ~$30B equity stake in OpenAI's record mega-round, Taalas exits stealth with $169M and a radical inference chip it claims is 73x faster than the H200, and Qunnect and Cisco demonstrate 10,000x faster quantum entanglement swapping over NYC fiber

Funding News: $200M+ for solid-state transformers, a new quantum VC fund, and fresh rounds across AI chips, photonics, and power delivery

Bonus: Why solid-state transformers are the unexpected picks-and-shovels play of the AI buildout

Spotlights

(Credit: Nvidia)

NVIDIA is reportedly finalizing a direct ~$30B equity stake in OpenAI, replacing an earlier $100B infrastructure partnership that never progressed beyond a letter of intent. The investment is part of OpenAI's record mega-round, which is expected to exceed $100B in total and value the company at roughly $830B. SoftBank and Amazon are also expected to participate.

The deal marks a strategic pivot. Rather than tying investment to infrastructure milestones, NVIDIA gains immediate equity in what CEO Jensen Huang called "one of the most consequential companies of our time". OpenAI is expected to reinvest much of the capital into NVIDIA hardware.

The circular economics here are hard to ignore: NVIDIA funds OpenAI, which spends the money buying NVIDIA chips. Some analysts call it vendor financing; others call it locking in the most important customer relationship in AI. Either way, it's the single largest chip-company-to-AI-lab investment in history, and it arrives three days before NVIDIA’s Q4 FY2026 earnings on February 25.

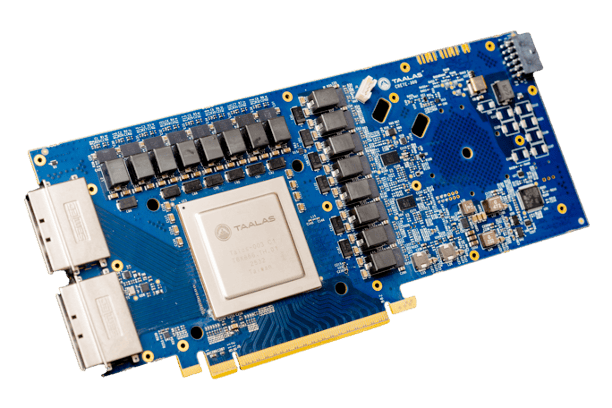

(Credit: Taalas)

Toronto-based Taalas emerged from stealth on February 19 with $169M in funding ($219M total) and a genuinely unconventional approach to AI inference: its HC1 chip "prints" model weights directly into transistors using mask ROM, rather than loading them into memory at runtime.

The result, according to the company: 73x more tokens per second than NVIDIA's H200 on Llama 3.1 8B, at one-tenth the power. By physically etching weights into the chip, Taalas eliminates the memory bottleneck and sidesteps the HBM shortage strangling competitors. The tradeoff: each chip is model-specific, meaning a new model requires a new chip.
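For a rough sense of what those two claims imply together, here is a back-of-the-envelope sketch in Python. It takes the company's figures at face value and assumes "one-tenth the power" means total power draw on the same workload; the variable names are ours, and none of this is an independent benchmark.

```python
# Vendor-claimed figures from the Taalas announcement (not independently verified).
speedup_vs_h200 = 73        # tokens per second relative to an H200, Llama 3.1 8B
power_reduction_factor = 10 # "one-tenth the power" (assumed: same workload)

# Energy efficiency (tokens per joule) scales as throughput divided by power,
# so a 73x speedup at 1/10 the power multiplies the two factors.
efficiency_gain = speedup_vs_h200 * power_reduction_factor

print(f"Implied tokens-per-joule advantage: ~{efficiency_gain}x")
```

If the assumption holds, the efficiency gap is an order of magnitude larger than the headline speedup, which is why the model-specific tradeoff may be worth it for high-volume deployments.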

Founded by ex-Tenstorrent CEO Ljubisa Bajic, Taalas claims its foundry-optimized workflow with TSMC can deliver a custom chip from model weights to deployed inference card in two months. The company is backed by Quiet Capital, Fidelity, and veteran semiconductor investor Pierre Lamond. A chip targeting frontier-class models is planned for the end of 2026.

(Credit: Quantum Zeitgeist)

Qunnect and Cisco demonstrated the first metro-scale quantum entanglement swapping over 17.6 km of deployed commercial fiber in New York City. The system achieved 1.7 million entangled pairs per hour locally and 5,400 pairs per hour over deployed fiber — nearly 10,000x better than previous benchmarks — with greater than 99% polarization fidelity.

What makes this notable beyond the raw numbers is the hardware: Qunnect's room-temperature endpoints and Cisco's orchestration software running on existing telecom infrastructure. No cryogenics in the field. No dedicated dark fiber. The quantum data coexists with classical internet traffic.

Days earlier, Photonic Inc. and TELUS achieved quantum teleportation over 30 km of commercial fiber in Canada, and Deutsche Telekom replicated similar results in Berlin. Three countries, three demonstrations, all on production fiber.

Headlines

Semiconductors

Jensen Huang teases "mystery chips the world has never seen" for GTC 2026 (March 16-19)

NVIDIA secures multigenerational Meta deal for millions of GPUs, including Grace standalone CPUs, covering a major slice of Meta's $115-135B 2026 capex

AMD denies MI455X delay reports as "total BS", says Helios rack-scale systems remain on target for H2 2026

Samsung and SK Hynix accelerate memory fab construction amid 60% fulfillment crisis; DRAM contract prices surging 90-95% QoQ

SCOTUS strikes down IEEPA tariffs in landmark 6-3 ruling; 25% semiconductor Section 232 tariffs survive under separate authority

Quantum

Pasqal delivers Italy's first neutral atom quantum computer (140 qubits) at CINECA supercomputing center under EuroHPC

Norwegian scientists discover potential triplet superconductor (NbRe) that could dramatically stabilize quantum computers

First single-shot Majorana qubit readout achieved by QuTech/CSIC, confirming topological qubit viability

NSF launches $100M quantum and nanotech infrastructure program with up to 16 open-access facilities

Data Centers and Cloud

EnCharge AI launches EN100, the first commercial analog in-memory AI accelerator at 20x performance per watt

Neuromorphic and Photonics

Sandia: neuromorphic computers solve partial differential equations, published in Nature Machine Intelligence

UT San Antonio launches THOR, the nation's first open-access neuromorphic computing hub

Funding News

A strong week for compute infrastructure. Two solid-state transformer startups raised a combined $200M on the same day (see Bonus below), while AI inference silicon, quantum networking, and power delivery all drew significant capital.

| Amount | Name | Round | Category |
|---|---|---|---|
| $169M | Taalas | | AI Inference Chips |
| $140M | Heron Power | Series B | DC Power Infrastructure |
| $60M | DG Matrix | Series A | DC Power Infrastructure |
| $50M | | | AI for Chip Design |
| $50M | | | Photonics / DC Networking |
| $220M | | | Quantum VC Fund |
| $15M | | | Quantum Networking |
| $15M | | | Power Delivery |
| $10M | | | Analog Semiconductors |
| $6M | | | Quantum Computing |

Also notable: Nscale signed a $1.4B GPU-backed debt facility to finance its European AI cluster deployment.

Bonus: The Most Boring Part of a Data Center Just Became a $200M Startup Category

(Credit: Heron Power)

Here's something nobody talks about when discussing the AI infrastructure buildout: the transformer. Not the neural network architecture — the actual, physical electrical transformer that sits between the power grid and every server rack.

These devices, based on technology largely unchanged since the 1890s, step high-voltage utility AC down to lower voltages, which downstream rectifiers and power supplies then convert into the low-voltage DC that chips need. They're massive, heavy, inefficient, and increasingly the bottleneck holding back data center deployment timelines. Lead times for large power transformers can stretch to three years or more.

On February 18, two startups raised a combined $200M to replace them.

Heron Power closed a $140M Series B led by a16z and Breakthrough Energy Ventures to scale its solid-state transformer, "Heron Link." Each unit handles 5 MW and converts medium-voltage AC directly to the 800V DC that NVIDIA's latest rack designs need, eliminating roughly 70% of the legacy power conversion equipment in a data center. The company reports more than 40 GW in customer inquiries. It was founded by Drew Baglino, Tesla's former SVP of Powertrain and Energy.
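To put the inquiry figure in perspective, a quick sketch of the unit count it would imply. This assumes every inquiry converted into an order and each unit ran at its full 5 MW rating with no redundancy, neither of which the company has claimed; inquiries are not orders.

```python
# Figures as reported by Heron Power; the conversion assumptions are ours.
unit_capacity_mw = 5   # rated capacity per Heron Link unit
inquiries_gw = 40      # "more than 40 GW" in customer inquiries (a lower bound)

# Convert GW to MW, then divide by per-unit capacity.
units_implied = inquiries_gw * 1000 // unit_capacity_mw

print(f"Units implied if all inquiries converted: {units_implied:,}")
```

Even at a small fraction of that conversion rate, the numbers point to manufacturing scale, not pilot volumes.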

The same day, DG Matrix raised a $60M Series A backed by ABB. Its "Interport" platform routes power from multiple sources at different voltages — useful for data centers mixing grid, solar, battery, and generator inputs.

What makes this interesting beyond the hardware is what it signals about the AI buildout's maturation. For three years, the bottleneck narrative has centered on GPUs, then HBM, then energy generation. Now it's reaching the mundane, physical layer: the power conversion equipment between the grid and the chip. GPUs are useless if you can't deliver clean power to them fast enough.

It's the ultimate picks-and-shovels play. Boring technology and an enormous market.